| |

// testKmlp.cpp

// https://forejune.co/cuda/

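#include <iostream>
#include <iomanip>
#include <cmath>
#include <cstdio>   // getchar
#include <cstdlib>  // rand, srand, exit
#include <ctime>    // time
#include <vector>
#include "kmeans.h"
#include "mlp.h"

using namespace std;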

const int screenWidth = 700;

const int screenHeight = 600;

void graphics(int argc, char *argv[]);

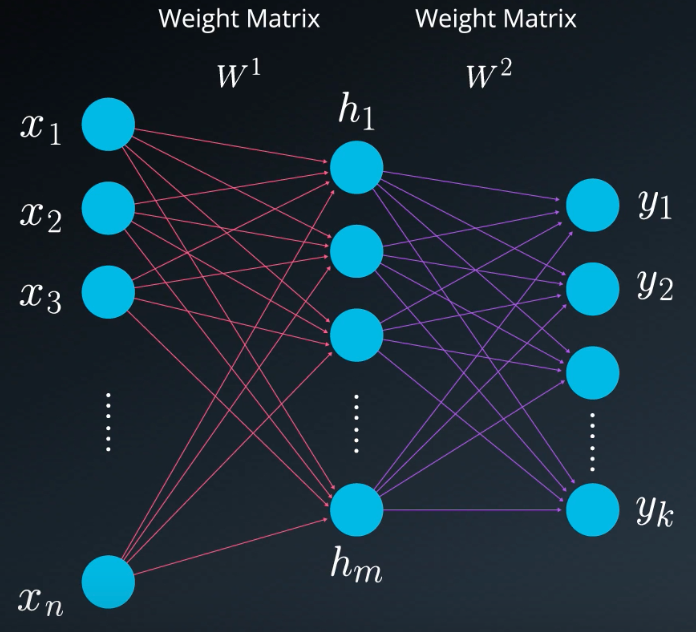

// return the index of the largest of the K outputs (argmax)
int classifier(double y[])
{
    int value = 0;
    double max = y[0];
    for (int i = 1; i < K; i++) {
        if (y[i] > max) {
            max = y[i];
            value = i;
        }
    }
    return value;
}

// true if any two centroids are closer than 0.01 in both x and y
bool overlap(const vector<Point> &centroids)
{
    int n = centroids.size();
    for (int i = 0; i < n; i++)
        for (int j = i + 1; j < n; j++)
            if (fabs(centroids[i].x - centroids[j].x) < 0.01 &&
                fabs(centroids[i].y - centroids[j].y) < 0.01)
                return true;
    return false;
}

// cluster the points using MLP; classify every delta-th point
void useMlp(MLP &mlp, vector<Point> &points, int delta)
{
    int n = points.size();
    double x[3];
    x[0] = 1; //bias
    int j = 0;
    for (int i = 0; i < n; i += delta) {
        x[1] = points[i].x;
        x[2] = points[i].y;
        double *output = mlp.forward(x);
        int label = classifier(output);
        cout << fixed << setprecision(2) << " " << x[1] << " " << x[2]
             << " : " << label << " (" << setprecision(1);
        for (int k = 0; k < K; k++) {
            cout << output[k];
            if (k < K - 1)
                cout << " ";
            else
                cout << ")" << "\t";
        }
        points[i].cluster = label;
        if (++j % 2 == 0) cout << endl; // two results per line
    }
}

MLP mlp(0.5);

vector< Point> centroids(K);

int main(int argc, char *argv[])
{
    srand((unsigned) time(0));
    const int numSamples = 900;
    int iterations = 50; // Number of K-means iterations

    // Generate data: random points in [0, 1) x [0, 1)
    vector<Point> points;
    for (int i = 0; i < numSamples; ++i) {
        Point p;
        p.x = (float) (rand() % screenWidth) / screenWidth;
        p.y = (float) (rand() % screenHeight) / screenHeight;
        points.push_back(p);
    }

    int c[K];
    // Randomly initialize centroids from points; retry if any two overlap
    do {
        for (int i = 0; i < K; i++) {
            c[i] = rand() % points.size();
            centroids[i] = {points[c[i]].x, points[c[i]].y};
        }
    } while (overlap(centroids));

    kmeans(points, centroids, iterations);

    int delta = 30;
    //output results for every delta-th point, two per line
    int k = 0;
    for (size_t j = 0; j < points.size(); j += delta) {
        cout << fixed << setprecision(2) << "(" << points[j].x << ", "
             << points[j].y << ") : " << points[j].cluster << "\t";
        if (++k % 2 == 0) cout << endl;
    }
    cout << endl;

    cout << "Training the MLP ..." << endl;
    mlp.train(points, 10000);

    // Test the MLP
    cout << "Testing MLP:" << endl;
    useMlp(mlp, points, delta);

    getchar(); // wait for a key press before opening the graphics window
    graphics(argc, argv);
    return 0;
}

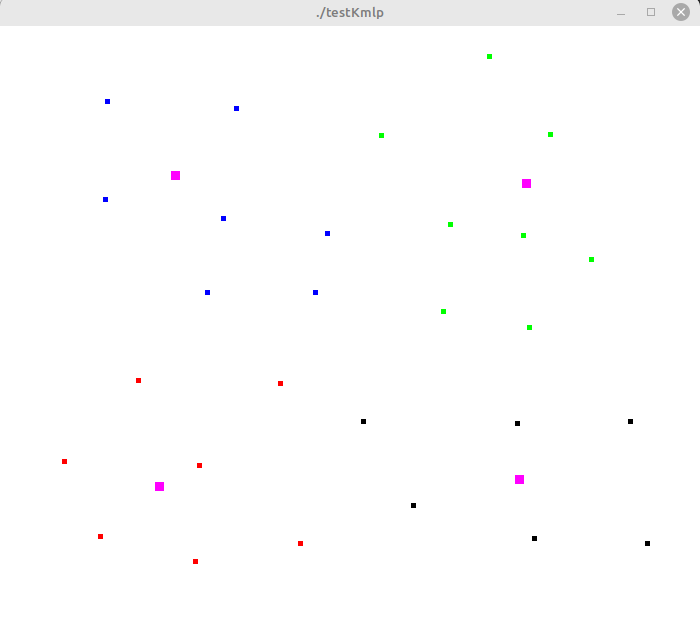

// graphics routines

#include <GL/glut.h>

//vector to store the points added with mouse clicks
vector<Point> pointVec;

void init()
{
    glClearColor(1, 1, 1, 1);     //clear color buffer with white color
    glClear(GL_COLOR_BUFFER_BIT); //clear color buffer
    //define coordinate system
    glMatrixMode(GL_PROJECTION);
    glLoadIdentity();
    gluOrtho2D(0, screenWidth, 0, screenHeight);
    glPointSize(3);
    glColor3f(0.0, 0.0, 0.0);     //draw with black color
}

void display()
{
    //flush out data to color buffer
    glFlush();
}

//draw one point
void drawDot(int x, int y)
{
    glBegin(GL_POINTS);
    glVertex2i(x, y);
    glEnd();
    glFlush();
}

//display cluster points with different colors and centroids
void displayClusters(vector<Point> &points)
{
    glClear(GL_COLOR_BUFFER_BIT); //clear color buffer
    //display data points
    int n = points.size();
    int k = centroids.size();
    glPointSize(5);
    for (int i = 0; i < n; i++) {
        int c = points[i].cluster;
        float color[3] = {0}; //display color (R, G, B)
        if (c >= 0 && c < 3)  //guard: a negative index would write outside color[]
            color[c] = 1;     //Red, green or blue
        //fourth color is black
        glColor3fv(color);
        drawDot(screenWidth * points[i].x, screenHeight * points[i].y);
    }
    //display centroids
    glPointSize(9);
    glColor3f(1, 0, 1); //magenta
    for (int j = 0; j < k; j++)
        drawDot(screenWidth * centroids[j].x, screenHeight * centroids[j].y);
    glColor3f(0, 0, 0); //restore black color
    glPointSize(3);
    glutPostRedisplay();
}

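void keyboard(unsigned char key, int x, int y)
{
    switch (key) {
    case 27: //escape: quit with a normal exit status
        exit(0);
    case 'd': //draw the clicked points colored by cluster
        displayClusters(pointVec);
        break;
    case 'm': //classify every clicked point with the MLP
        useMlp(mlp, pointVec, 1);
        break;
    }
}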

void mouse(int button, int state, int mx, int my)
{
    int x = mx, y = screenHeight - my;
    if (button == GLUT_LEFT_BUTTON && state == GLUT_DOWN) {
        drawDot(x, y);
        Point pt = {(double) x / screenWidth, (double) y / screenHeight};
        pointVec.push_back(pt);
    }
    glFlush();
}

void graphics(int argc, char *argv[])
{
    glutInit(&argc, argv); //Initialization
    glutInitDisplayMode(GLUT_SINGLE | GLUT_RGB);
    glutInitWindowSize(screenWidth, screenHeight);
    glutInitWindowPosition(59, 50);
    glutCreateWindow(argv[0]);
    init();
    // specifies callback functions
    glutKeyboardFunc(keyboard);
    glutMouseFunc(mouse);
    glutDisplayFunc(display);
    glutMainLoop(); //go into perpetual loop
}

|
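
The two helper routines in the listing, `classifier()` and `overlap()`, can be tried without OpenGL or the project headers. The block below is only a minimal sketch under stated assumptions: `DemoPoint`, `DEMO_K`, `demoClassifier` and `demoOverlap` are hypothetical stand-ins, since the real `Point` and `K` come from `kmeans.h`, which is not shown here. The value 4 for `DEMO_K` is a guess based on the "fourth color is black" comment in `displayClusters()`.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical stand-in for the Point type declared in kmeans.h (not shown).
struct DemoPoint {
    double x;
    double y;
    int cluster;
};

const int DEMO_K = 4; // assumed number of clusters / MLP outputs

// Argmax: index of the largest of the DEMO_K outputs.
// On ties the lowest index wins, as in the listing's classifier().
int demoClassifier(const double y[])
{
    int best = 0;
    for (int i = 1; i < DEMO_K; ++i) {
        if (y[i] > y[best])
            best = i;
    }
    return best;
}

// True when any two centroids differ by less than eps in both x and y.
bool demoOverlap(const std::vector<DemoPoint> &centroids, double eps = 0.01)
{
    for (std::size_t i = 0; i < centroids.size(); ++i)
        for (std::size_t j = i + 1; j < centroids.size(); ++j)
            if (std::fabs(centroids[i].x - centroids[j].x) < eps &&
                std::fabs(centroids[i].y - centroids[j].y) < eps)
                return true;
    return false;
}
```

For example, calling `demoClassifier` on the outputs `{0.1, 0.7, 0.15, 0.05}` selects index 1, and `demoOverlap` reports true for centroids at (0.100, 0.100) and (0.105, 0.105). Using `std::fabs` rather than an unqualified `abs` avoids accidentally picking the integer overload when `<cstdlib>` is also included.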