// mlp3.cpp

// https://forejune.co/cuda/

#include <cmath>
#include <stdlib.h>
#include <stdio.h>
#include <iostream>
#include <utility>
#include <vector>
#include "mlp3.h"

using namespace std;

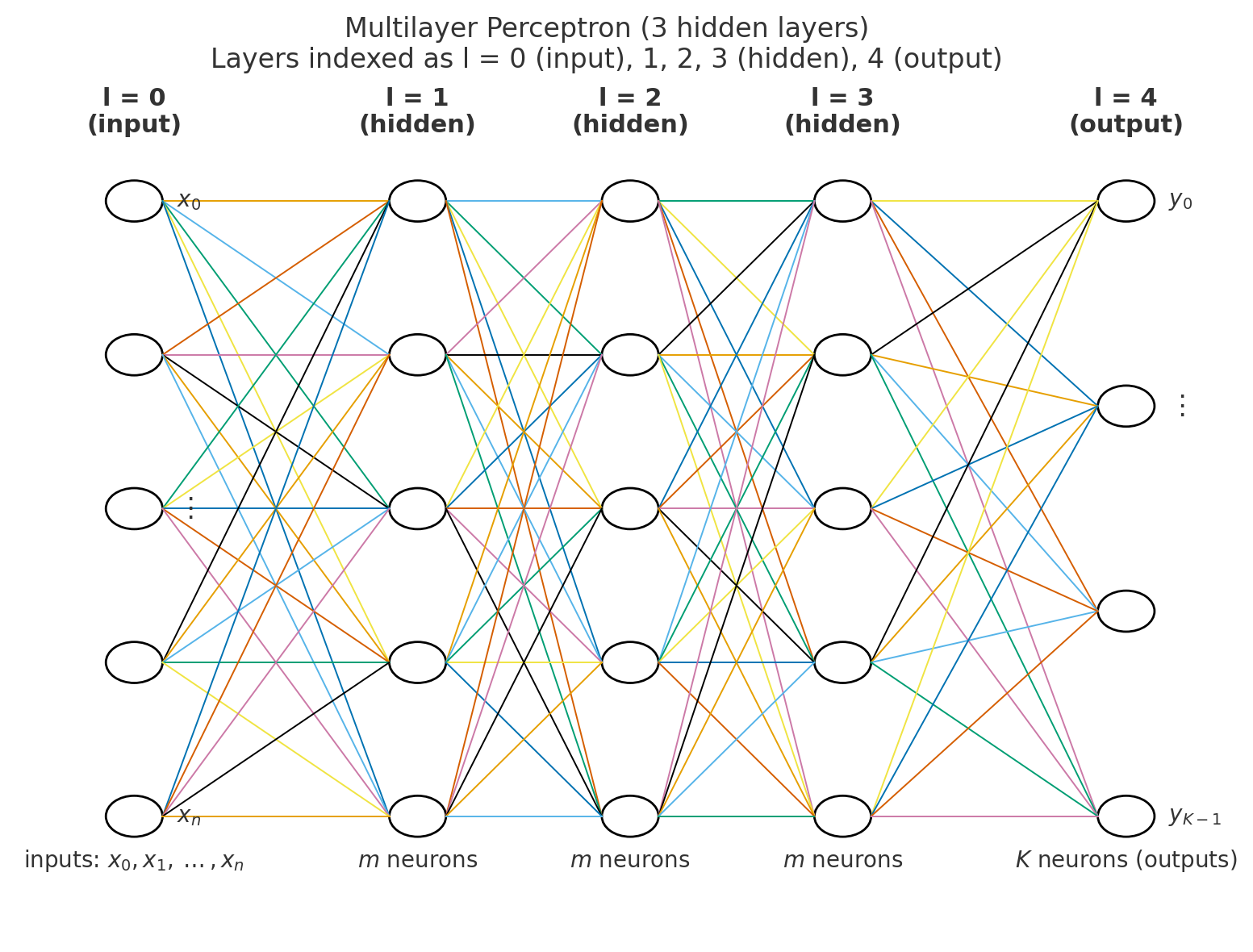

// Size y, z and a so that y[k], z[l][j] and a[l][j] are valid elements.
// reserve() only sets capacity and leaves size() at 0, so indexing after
// reserve() alone is undefined behavior; assign() creates the elements.
void set_yzaCapacity(vector<double> &y, vector<vector<double>> &z, vector<vector<double>> &a,
                     const vector<int> &sizes, const int L)
{
    int K = sizes[L];                       // output size
    y.assign(K, 0.0);                       // K outputs
    z.assign(L + 1, vector<double>());      // one row per layer
    a.assign(L + 1, vector<double>());      // one row per layer
    for (int l = 0; l < L + 1; l++) {
        z[l].assign(sizes[l], 0.0);         // weighted sums of layer l
        a[l].assign(sizes[l], 0.0);         // activations of layer l
    }
}

// Size w so that w[l][i][j] is a valid element for every connection from
// node i of layer l to node j of layer l+1. assign() is used instead of
// reserve() because reserve() does not create elements.
void setWeightCapacity(vector<vector<vector<double>>> &w, const vector<int> &sizes, const int L)
{
    w.assign(L, vector<vector<double>>());
    for (int l = 0; l < L; l++)
        w[l].assign(sizes[l], vector<double>(sizes[l+1], 0.0));
}

// Initialize every weight to a pseudo-random value in (-1, 1)
void initializeWeights(vector<vector<vector<double>>> &w, const vector<int> &sizes, const int L)
{
    for (int l = 0; l < L; l++) {
        for (int i = 0; i < sizes[l]; i++)
            for (int j = 0; j < sizes[l+1]; j++) {
                w[l][i][j] = rand() % 1000 / 1000.0;    // magnitude in [0, 0.999]
                if (rand() % 2 == 0)
                    w[l][i][j] = -w[l][i][j];           // random sign
            }
    }
}

// constructors
MLP::MLP()
{
}

// Builds the network from the values of n1, m1, K, H and L that the object
// already holds when this constructor runs.
MLP::MLP(double learning_rate)
{
    if (n1 > maxNodes || m1 > maxNodes || K > maxNodes) {
        cout << "A layer has exceeded max number of nodes allowed!" << endl;
        exit(1);
    }
    eta = learning_rate;
    sizes.push_back(n1);        // sizes[0] = n1; size of input layer
    for (int i = 0; i < H; i++)
        sizes.push_back(m1);    // sizes[i+1] = m1; size of each hidden layer
    sizes.push_back(K);         // sizes[L] = K; size of output layer
    setWeightCapacity(w, sizes, L);
    set_yzaCapacity(y, z, a, sizes, L);
    initializeWeights(w, sizes, L);
}

MLP::MLP(double learning_rate, int n10, int K0, vector<int> hidden)
{
    H = hidden.size();
    // Validate the requested sizes n10 and K0. The members n1 and K are
    // only assigned further down, so checking them here would be wrong.
    if (n10 > maxNodes || K0 > maxNodes) {
        cout << "Input or Output layer has exceeded max number of nodes allowed!" << endl;
        exit(1);
    }
    for (int i = 0; i < H; i++) {
        if (hidden[i] > maxNodes) {
            cout << "A hidden layer has exceeded max number of nodes allowed!" << endl;
            exit(1);
        }
    }
    eta = learning_rate;
    n1 = n10;
    K = K0;
    sizes.push_back(n1);                // sizes[0] = n1; size of input layer
    for (int i = 0; i < H; i++)
        sizes.push_back(hidden[i]);     // sizes[i+1] = hidden[i]
    sizes.push_back(K);                 // sizes[L] = K; size of output layer
    L = H + 1;
    setWeightCapacity(w, sizes, L);
    set_yzaCapacity(y, z, a, sizes, L);
    initializeWeights(w, sizes, L);
}

// Sigmoid activation function
double MLP::f(double x)
{
    return 1.0 / (1.0 + exp(-x));
}

// Derivative of sigmoid function, evaluated at the pre-activation value x
double MLP::fd(double x)
{
    double s = f(x);
    return s * (1 - s);
}

// Forward propagation; returns a pointer to the K network outputs
double* MLP::forward(double x[])
{
    for (int i = 0; i < n1; i++)
        a[0][i] = z[0][i] = x[i];
    for (int l = 0; l < L; l++) {
        int left = sizes[l];        // size of left side layer
        int right = sizes[l+1];     // size of right side layer
        for (int j = 0; j < right; j++) {
            z[l+1][j] = 0;
            for (int i = 0; i < left; i++)
                z[l+1][j] += w[l][i][j] * a[l][i];
            if (l < L-1 && j == 0)  // node 0 of each hidden layer is the bias node
                a[l+1][j] = 1;
            else
                a[l+1][j] = f(z[l+1][j]);
        }
    }
    for (int k = 0; k < K; k++)
        y[k] = a[L][k];
    return y.data();    // pointer into y; stays valid until y is resized
}

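// Backward propagation, yd is the desired output.
// x is not used here: forward() has already stored the inputs in a[0].
// delta[l] holds the error terms of layer l+1.
void MLP::backward(double yd[], double x[])
{
    // A vector replaces the variable-length array delta[L][maxNodes],
    // which is a compiler extension and not standard C++.
    vector<vector<double>> delta(L);
    for (int l = 0; l < L; l++)
        delta[l].assign(sizes[l+1], 0.0);
    for (int k = 0; k < K; k++) {
        double e = yd[k] - y[k];            // output layer error
        delta[L-1][k] = e * fd(z[L][k]);    // same as e * a[L][k] * (1 - a[L][k])
    }
    for (int l = L - 1; l >= 1; l--) {
        int left = sizes[l];                // size of left layer
        int right = sizes[l+1];             // size of right layer
        // Node 0 of a hidden layer is the bias node. Its output is fixed at 1
        // in forward(), so its error term stays 0 and the loop starts at j = 1.
        for (int j = 1; j < left; j++) {
            delta[l-1][j] = 0;
            for (int k = 0; k < right; k++)
                delta[l-1][j] += w[l][j][k] * delta[l][k];
            delta[l-1][j] *= fd(z[l][j]);
        }
    }
    // Update weights
    for (int l = 0; l < L; l++) {
        int left = sizes[l];
        int right = sizes[l+1];
        for (int i = 0; i < left; i++)
            for (int j = 0; j < right; j++)
                w[l][i][j] += eta * delta[l][j] * a[l][i];
    }
}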

// Train the perceptron
// db = training set: db.inputs[k] is the k-th input vector,
//      db.labels[k] is its target output
// epochs = number of passes over the whole training set
// The learning rate eta is the one set by the constructor.
void MLP::train(const database &db, int epochs)
{
    // Vectors replace the variable-length arrays x[n1] and yd[K],
    // which are not standard C++.
    vector<double> x(n1);   // inputs
    vector<double> yd(K);   // desired output
    int numSamples = db.inputs.size();
    cout << "numSamples=" << numSamples << endl;
    for (int epoch = 0; epoch < epochs; epoch++) {
        for (int k = 0; k < numSamples; k++) {
            for (int i = 0; i < n1; i++)
                x[i] = db.inputs[k][i];
            for (int i = 0; i < K; i++)
                yd[i] = db.labels[k][i];
            forward(x.data());
            backward(yd.data(), x.data());
        }
    }
}

int MLP::inputSize()
{
    return n1;
}

int MLP::outputSize()
{
    return K;
}

// Save weights and parameters as text: eta, L, the L+1 layer sizes, then
// one line of weights per left-side node. Returns 1 on success, -1 on error.
int MLP::saveWeightsAndParam(char fname[])
{
    FILE *fp;
    if ((fp = fopen(fname, "w")) == NULL) {    // "w" is the standard text-mode flag
        printf("\nError opening file %s\n", fname);
        return -1;
    }
    fprintf(fp, "%6.3f \n", eta);
    fprintf(fp, "%d\n", L);
    for (int l = 0; l < L+1; l++)
        fprintf(fp, "%d ", sizes[l]);
    fprintf(fp, "\n");
    for (int l = 0; l < L; l++) {
        int left = sizes[l];
        int right = sizes[l+1];
        for (int i = 0; i < left; i++) {
            for (int j = 0; j < right; j++)
                fprintf(fp, "%7.4f ", w[l][i][j]);  // weights are rounded to 4 decimals
            fprintf(fp, "\n");
        }
    }
    fclose(fp);
    return 1;
}

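// Read weights and parameters written by saveWeightsAndParam().
// Returns 1 on success, -1 if the file cannot be opened or parsed.
int MLP::readWeightsAndParam(char fname[])
{
    FILE *fp;
    if ((fp = fopen(fname, "r")) == NULL) {
        printf("\nError opening file %s\n", fname);
        return -1;
    }
    bool ok = fscanf(fp, "%lf", &eta) == 1 && fscanf(fp, "%d", &L) == 1 && L >= 1;
    sizes.clear();      // discard layer sizes left over from a constructor
    for (int l = 0; ok && l < L+1; l++) {
        int sz = 0;
        ok = fscanf(fp, "%d", &sz) == 1;
        sizes.push_back(sz);
    }
    if (!ok) {
        printf("\nError reading parameters from file %s\n", fname);
        fclose(fp);
        return -1;
    }
    n1 = sizes[0];
    K = sizes[L];
    H = L - 1;          // keeps H consistent with L = H + 1 used by the constructor
    setWeightCapacity(w, sizes, L);
    for (int l = 0; l < L; l++) {
        int left = sizes[l];
        int right = sizes[l+1];
        for (int i = 0; i < left; i++) {
            for (int j = 0; j < right; j++)
                if (fscanf(fp, "%lf", &w[l][i][j]) != 1)
                    ok = false;
        }
    }
    fclose(fp);
    if (!ok) {
        printf("\nError reading weights from file %s\n", fname);
        return -1;
    }
    set_yzaCapacity(y, z, a, sizes, L);
    return 1;
}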

MLP::~MLP()
{
}
